Clinical Review

When genetic test reports are delayed, treatment decisions are delayed. I redesigned the workflow that determines how fast 585,000 patients per year get their results.

The clinical problem: In 2024, Natera processed a record 3,064,600 tests. About 19% of them (roughly 585,000 reports) required manual review by genetic counselors and lab directors before reaching patients. The existing workflow was fragmented across multiple tools, creating delays that directly affected patient care timelines.

Why this matters: In prenatal genetic testing, a delayed result can mean a delayed decision about pregnancy management. In oncology, it can affect treatment timing. The review workflow isn’t just an operational efficiency problem; it has direct clinical impact.

The constraint: Design for expert users (genetic counselors, lab directors) in a regulated environment where accuracy cannot be compromised for speed. Every workflow change needed clinical validation.

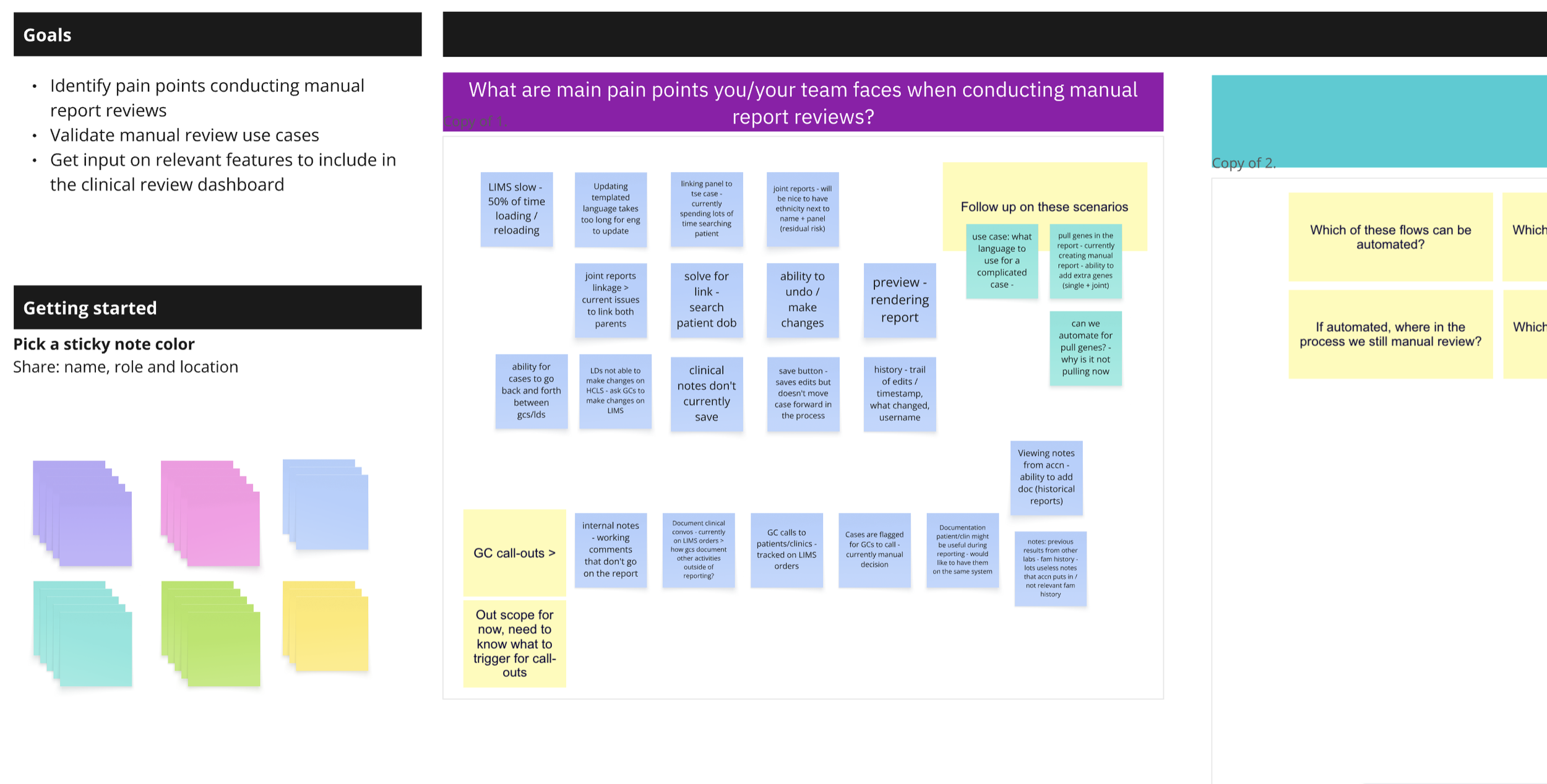

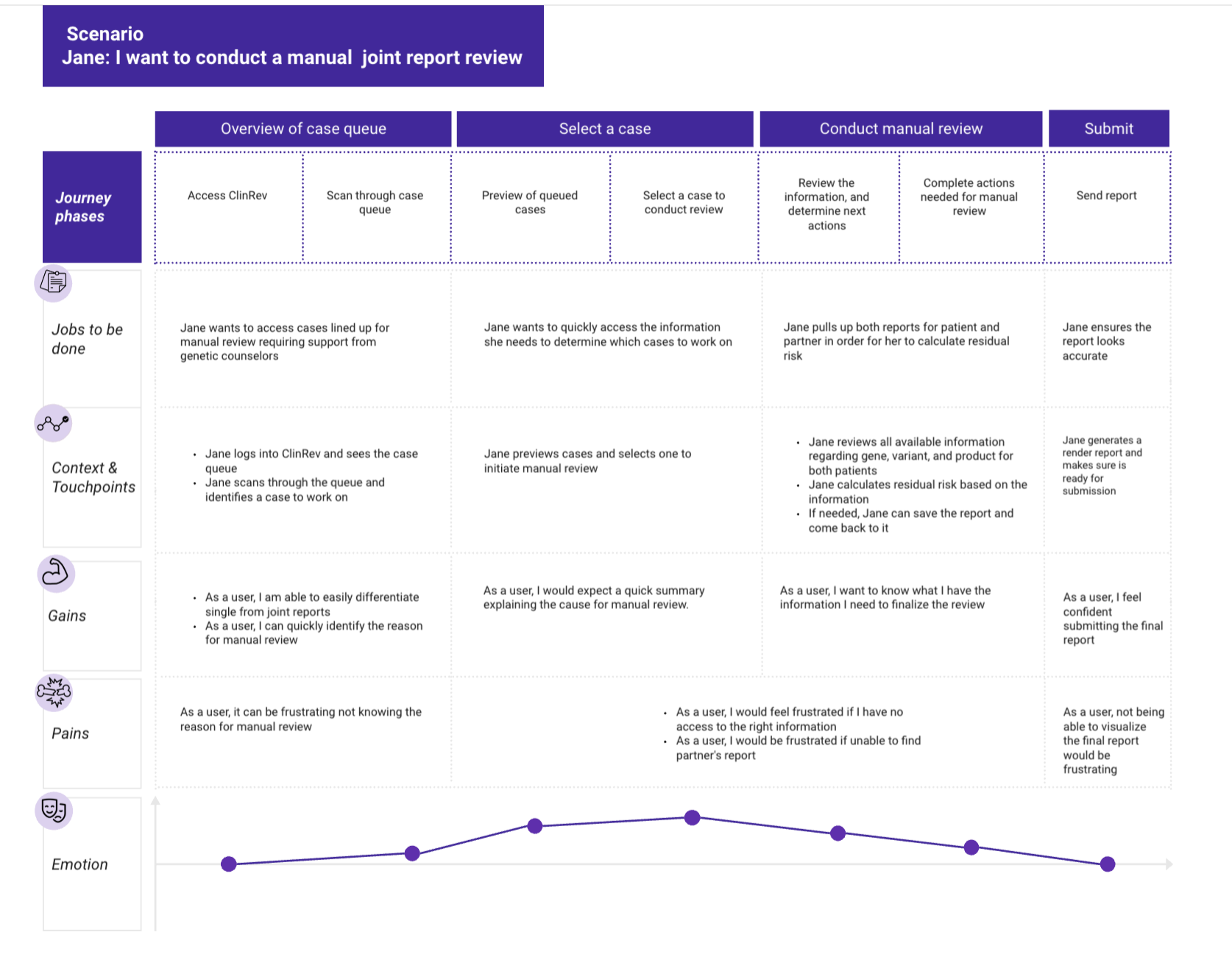

I led discovery with 15 end-users, 8 subject matter experts, and 6 department leaders across Natera’s women’s health, oncology, and organ health divisions.

Methods

- 2,000+ hours of contextual inquiry and live shadowing with genetic counselors and lab directors in their actual work environments

- Thematic analysis of interview transcripts to identify workflow pain points

- Cross-functional vision workshop to align stakeholders on goals and constraints

- Distributed cognition analysis to map how information flows across people, tools, and environments

Why distributed cognition

Clinical workflows don’t exist in individual heads; they’re distributed across multiple people, tools, and handoffs. The framework helped me identify where cognitive load was unnecessarily high and where system design was compensating for (or creating) workflow friction.

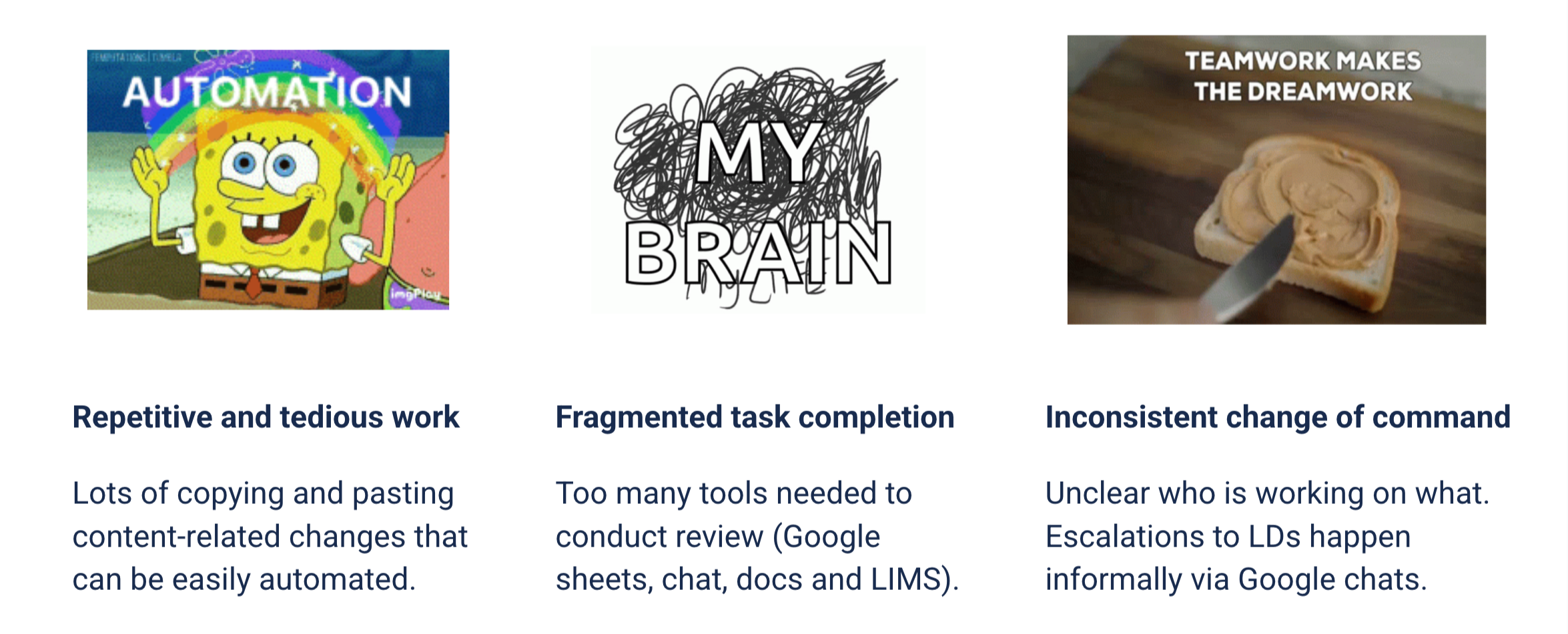

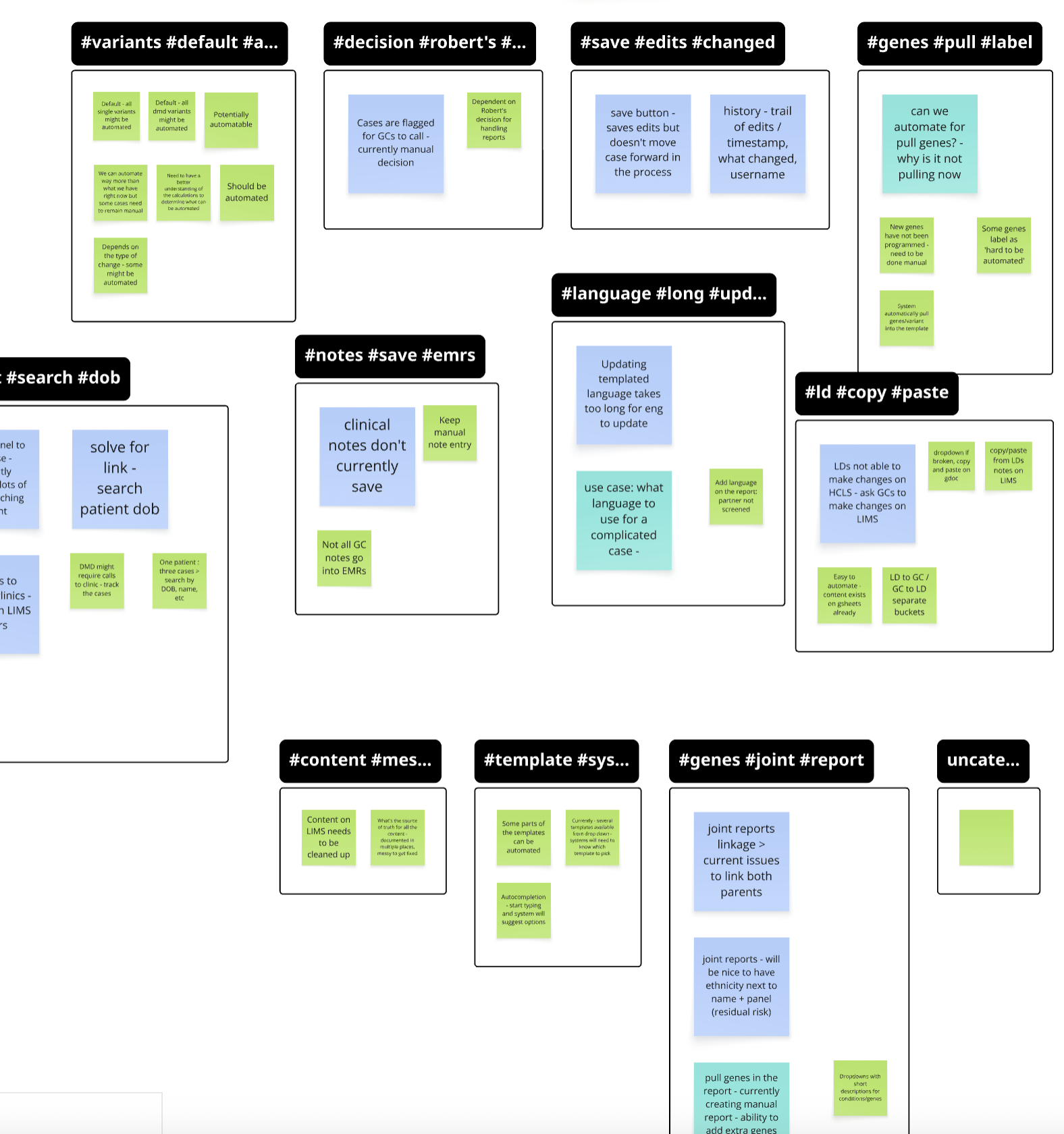

Finding 1: Underutilized expertise

Genetic counselors were spending time on repetitive administrative tasks rather than complex clinical decisions. Their domain expertise was being wasted on workflow friction.

Finding 2: Error-prone handoffs

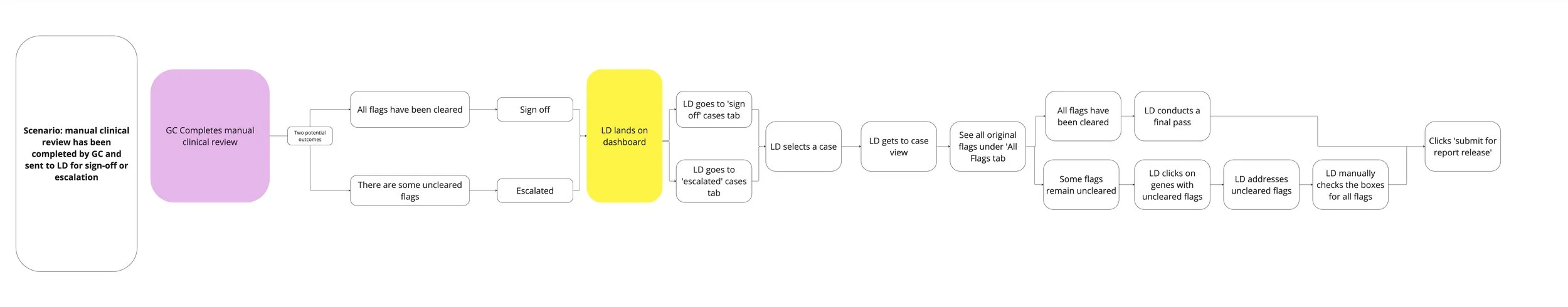

The transition point between genetic counselor review and lab director approval had no automated validation. Manual handoffs created a risk of errors that could affect patient results.

Finding 3: Role confusion

Users didn’t have clear visibility into what tasks were theirs versus someone else’s. This created duplicate work and missed handoffs.

Finding 4: Context switching

Reviewers toggled between 4 different tools to complete a single review. Each context switch increased cognitive load and time to completion.

Design principle

Design for the expert user’s mental model, not the system’s data model. Genetic counselors think in terms of clinical cases, not database records.

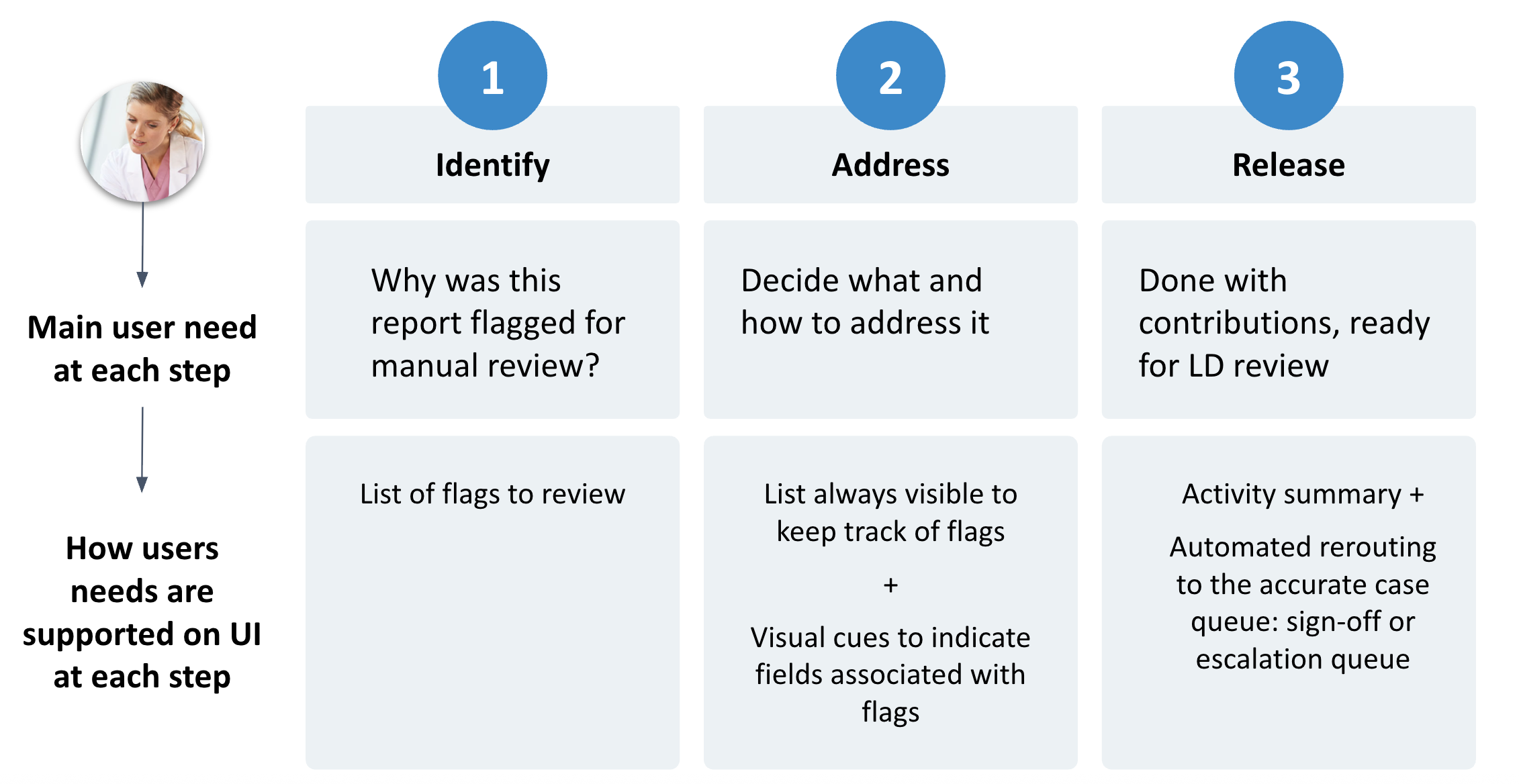

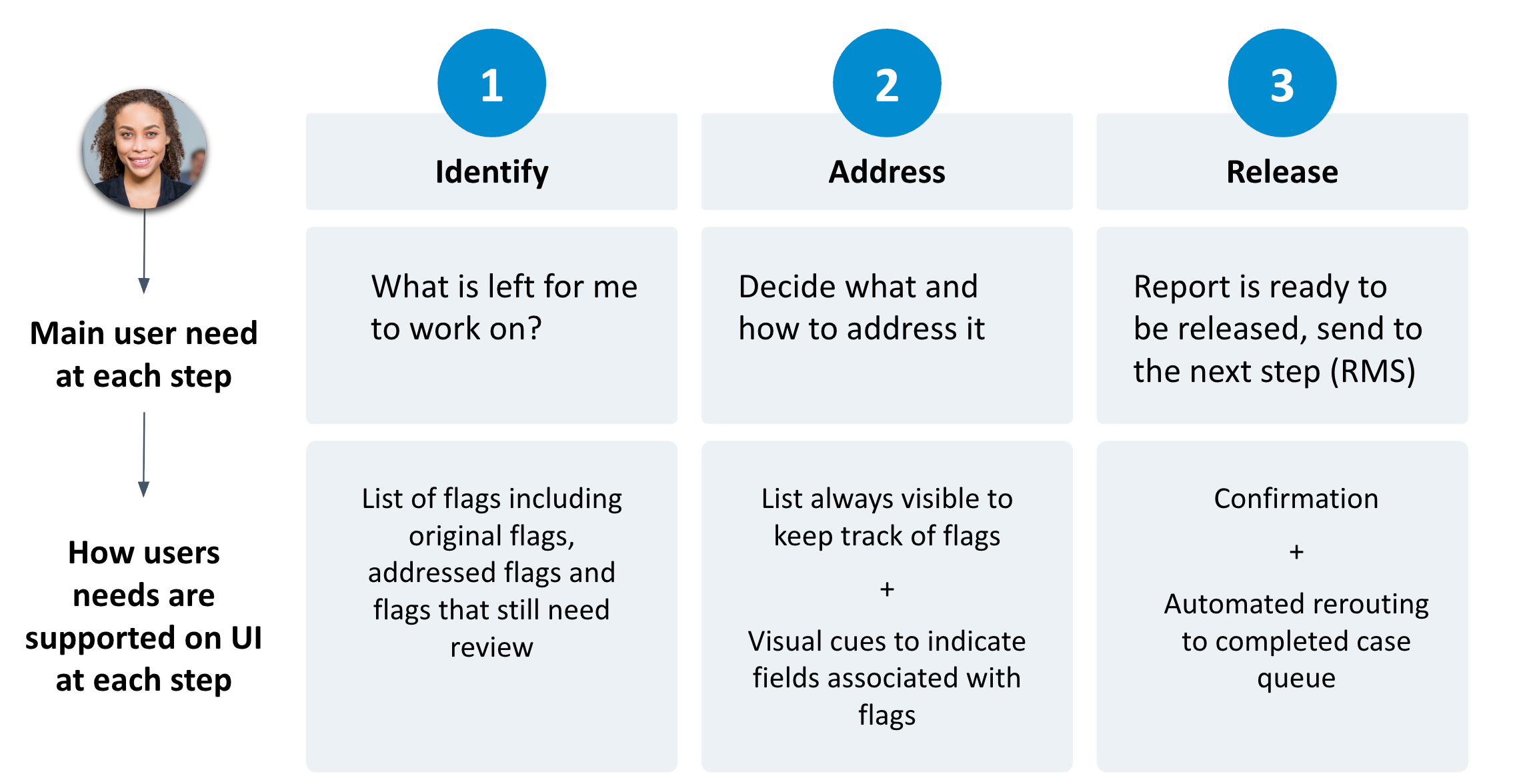

What we built: A unified review interface with role-specific queues, automated validations at handoff points, and consolidated case views that eliminated the need for multiple tools.

Key design decisions

- Queue-based workflow — cases route automatically based on type and status

- Progressive disclosure — show only information relevant to the current decision

- Built-in validations — prevent incomplete handoffs before they happen

- Role-based permissions — genetic counselors and lab directors see different views of the same case
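For readers on the engineering side, here is a minimal sketch of how queue routing, handoff validation, and role-based views could fit together. It assumes a simplified two-stage flow (counselor review, then lab director approval). Every name in it (`Case`, `Status`, `route`, `hand_off`, `view_for`, the case types and field lists) is a hypothetical illustration, not the production system's data model or API.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Role(Enum):
    GENETIC_COUNSELOR = "genetic_counselor"
    LAB_DIRECTOR = "lab_director"


class Status(Enum):
    NEEDS_GC_REVIEW = "needs_gc_review"      # waiting on a genetic counselor
    NEEDS_LD_APPROVAL = "needs_ld_approval"  # waiting on a lab director
    RELEASED = "released"                    # report sent; in no queue


@dataclass
class Case:
    case_id: str
    case_type: str               # hypothetical labels, e.g. "prenatal", "oncology"
    status: Status
    interpretation: str = ""     # counselor's clinical interpretation
    gc_signed_off: bool = False  # counselor has signed off


# Hypothetical routing rule: status picks the role's queue, type picks the sub-queue.
STATUS_TO_ROLE = {
    Status.NEEDS_GC_REVIEW: Role.GENETIC_COUNSELOR,
    Status.NEEDS_LD_APPROVAL: Role.LAB_DIRECTOR,
}

# Hypothetical role-based views: each role sees only the fields for its decision.
VISIBLE_FIELDS = {
    Role.GENETIC_COUNSELOR: ("case_id", "case_type", "interpretation"),
    Role.LAB_DIRECTOR: ("case_id", "case_type", "interpretation", "gc_signed_off"),
}


def route(case: Case) -> Optional[tuple[Role, str]]:
    """Return the (role, case_type) queue for a case, or None once released."""
    role = STATUS_TO_ROLE.get(case.status)
    return (role, case.case_type) if role is not None else None


def build_queues(cases: list[Case]) -> dict[tuple[Role, str], list[Case]]:
    """Group cases into role- and type-specific queues automatically."""
    queues = defaultdict(list)
    for case in cases:
        key = route(case)
        if key is not None:
            queues[key].append(case)
    return dict(queues)


def handoff_problems(case: Case) -> list[str]:
    """Checks that must pass before a counselor hands a case to a lab director."""
    problems = []
    if case.status is not Status.NEEDS_GC_REVIEW:
        problems.append("case is not in counselor review")
    if not case.interpretation.strip():
        problems.append("interpretation is empty")
    if not case.gc_signed_off:
        problems.append("counselor sign-off is missing")
    return problems


def hand_off(case: Case) -> None:
    """Move a case to lab director approval, refusing incomplete handoffs."""
    problems = handoff_problems(case)
    if problems:
        raise ValueError(f"{case.case_id}: " + "; ".join(problems))
    case.status = Status.NEEDS_LD_APPROVAL


def view_for(case: Case, role: Role) -> dict[str, object]:
    """Return only the fields the given role needs for its current decision."""
    return {name: getattr(case, name) for name in VISIBLE_FIELDS[role]}
```

In this sketch, a counselor calling `hand_off` on a case with an empty interpretation gets a `ValueError` listing what is missing, and the case stays in the counselor queue. The point of the design is that validation runs at the handoff itself, so an incomplete case never reaches the lab director's queue in the first place.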

Personas

Genetic Counselor: high-volume review, needs efficiency and accuracy

Lab Director: final approval, needs oversight and audit trail

Testing approach: Conducted iterative usability testing throughout the design process, not just at the end. Tested with actual genetic counselors and lab directors using real case examples.

What we learned: Early prototypes assumed too much knowledge of the new system. We added contextual help and status indicators that made the workflow learnable without formal training.

It feels like it was designed for how we actually work.

Genetic counselor, UAT feedback

Quantitative impact

- 50% reduction in report turnaround time

- 4 legacy systems replaced with 1 unified tool

- 585,000 reports/year processed through the new workflow

Qualitative impact

- Higher satisfaction among genetic counselors and lab directors

- Reduced training time for new hires

- Eliminated context-switching overhead

What this means for AI: The next iteration of this workflow will integrate AI-assisted anomaly detection to flag cases that need closer review. The design challenge will be the same one I encountered here: how do we surface AI recommendations in a way that supports clinical judgment rather than replacing it? The handoff between algorithmic confidence and human expertise is the critical design problem.

What I learned: Clinical workflows are rarely linear. The documented process and the actual process are often different, and the gap between them is where the design problems live. Shadowing users in their actual environment revealed pain points that interview data alone wouldn’t have surfaced.

What I’d do differently: I would have involved lab leadership earlier in the design process. Some of the handoff logic we built had to be reworked because we didn’t fully understand the approval hierarchy until later in the project.

Next steps: We’re extending this unified review framework to other product lines and building analytics dashboards that give leadership real-time visibility into review bottlenecks.